Get Started with Machine Learning Using Readily Available Hardware and Software

Contributed By DigiKey's North American Editors

2018-08-29

For developers, advances in hardware and software for machine learning (ML) promise to bring these sophisticated methods to Internet of Things (IoT) edge devices. As this field of research evolves, however, developers can easily find themselves immersed in the deep theory behind these techniques instead of focusing on currently available solutions to help them get an ML-based design to market.

To help designers get moving more quickly, this article briefly reviews the objectives and capabilities of ML, the ML development cycle, and the architecture of a basic fully connected neural network and a convolutional neural network (CNN). It then discusses the frameworks, libraries, and drivers that are enabling mainstream ML applications.

It concludes by showing how general purpose processors and FPGAs can serve as the hardware platform for implementing machine learning algorithms.

Introduction to ML

A subset of artificial intelligence (AI), ML encompasses a wide range of methods and algorithms. It has rapidly gained attention as a powerful technique for classifying data or finding patterns of interest in data streams. Broad classes of algorithms have emerged to address specific types of problems.

For example, clustering techniques and other unsupervised learning methods can reveal distinct classes of data in large datasets. Reinforcement learning provides methods capable of exploring unknown states and selecting alternative solutions with the goal of learning to recognize those states and respond appropriately in the future. Finally, supervised learning methods use prepared input data paired with the desired output to teach an algorithm how to classify new input data.

Supervised learning methods earn their name from their use of carefully prepared training sets that pair input data (called a feature vector) with the expected output (called a label) to train an algorithmic model to classify unlabeled input data patterns in the future. For example, developers might have several feature vectors comprising different sets of sampled sensor values that all represent a safe condition in some industrial process, and other feature vectors with their own sensor samples that all represent an unsafe condition.

Supervised learning methods can use these representative feature vectors and their known safe/unsafe labels to train an algorithm to recognize other safe and unsafe conditions based on new sensor values.

Neural networks

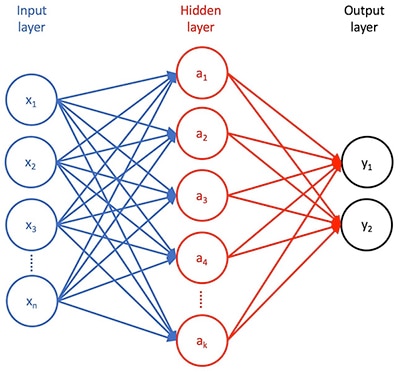

Among supervised learning methods, neural network algorithms have rapidly gained acceptance for their ability to accurately classify data. A basic neural network has three stages (Figure 1). The first is an input layer comprising inputs for each feature in the input feature vector. The second is a hidden layer of some number of neurons that transform those features in different ways. The third layer is an output layer that presents the results of that transformation as a set of probabilities that the input feature vector can be classified with one of the labels provided during training.

Figure 1: A neural network comprises an input layer, one or more hidden transformation layers, and an output layer that presents the results of that transformation. (Image source: DigiKey)

In addition, each connection between neurons in one layer and neurons in the subsequent layer has an associated weight that effectively represents the relative strength of that particular connection.

In a fully connected neural network, each input neuron $i$ presents its feature value $x_i$, scaled by the weighting factor $w_{ij}$ associated with each target neuron $a_j$ in the following hidden layer. Each hidden layer neuron $a_j$ sums the weighted inputs $w_{1j}x_1 + w_{2j}x_2 + \dots + w_{nj}x_n$ (plus a bias value), and then applies an activation function that scales or otherwise reduces the summed result presented to the neurons attached to its output. This process repeats through any additional hidden layers and the final output layer, where the resulting value represents the probability that the input feature vector $[x_1, x_2, \dots, x_n]$ can be classified as label $y_1$ or $y_2$ (for the simple network shown in Figure 1).
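
The weighted-sum-and-activate computation described above can be sketched in a few lines of plain Python. This is a minimal illustration, not production code: it assumes ReLU for the hidden layer and softmax to turn the output scores into probabilities (the text leaves the activation function unspecified), and the weights, biases, and function names (`dense_layer`, `forward`) are hypothetical.

```python
import math

def relu(z):
    """Rectified linear activation: passes positive sums, zeroes negative ones."""
    return max(0.0, z)

def dense_layer(x, w, b, activation):
    """One fully connected layer.

    x: n input values [x_1..x_n]
    w: n-by-m weights, w[i][j] connects input i to neuron j
    b: m bias values, one per neuron
    """
    n, m = len(x), len(b)
    outputs = []
    for j in range(m):
        # Weighted sum w_1j*x_1 + ... + w_nj*x_n plus the neuron's bias.
        z = sum(w[i][j] * x[i] for i in range(n)) + b[j]
        outputs.append(activation(z))
    return outputs

def softmax(z):
    """Convert raw output scores into probabilities that sum to 1."""
    shift = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - shift) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def forward(x, w_hidden, b_hidden, w_out, b_out):
    hidden = dense_layer(x, w_hidden, b_hidden, relu)
    scores = dense_layer(hidden, w_out, b_out, lambda z: z)  # linear output layer
    return softmax(scores)  # probabilities for labels y_1 and y_2

# Hypothetical parameters for a 3-input, 2-hidden-neuron, 2-output network.
x = [0.5, -1.0, 2.0]                                # feature vector [x_1, x_2, x_3]
w_hidden = [[0.2, -0.4], [0.7, 0.1], [-0.3, 0.5]]   # 3 inputs -> 2 hidden neurons
b_hidden = [0.1, 0.0]
w_out = [[1.0, -1.0], [0.8, -0.2]]                  # 2 hidden -> 2 outputs
b_out = [0.0, 0.0]
probs = forward(x, w_hidden, b_hidden, w_out, b_out)
```

With these made-up weights, the first hidden neuron's weighted sum is negative, so ReLU zeroes it, and the network assigns a higher probability to $y_1$ than to $y_2$.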

The training process refines the model to achieve the best possible match between the training set vectors and their associated labels by adjusting the weights and bias values, collectively termed the parameters of a model. Typically starting with some set of random model parameters, the training algorithm repeatedly passes the training data set through the model. With each complete pass, or epoch, the training algorithm attempts to reduce the difference between predicted labels and known labels, a difference calculated by a loss function.
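
The text does not name a specific loss function. Cross-entropy is a common choice for classification, so the sketch below assumes it; `cross_entropy` and `epoch_loss` are hypothetical helper names. The loss is small when the model assigns high probability to the known label and grows sharply as that probability falls.

```python
import math

def cross_entropy(predicted, label_index):
    """Loss for one example: -log of the probability assigned to the known label."""
    eps = 1e-12  # guard against log(0)
    return -math.log(max(predicted[label_index], eps))

def epoch_loss(predictions, labels):
    """Average loss over one complete pass (epoch) through the training set."""
    total = sum(cross_entropy(p, y) for p, y in zip(predictions, labels))
    return total / len(labels)

good = cross_entropy([0.9, 0.1], 0)  # confident and correct: small loss
bad = cross_entropy([0.9, 0.1], 1)   # confident and wrong: large loss
avg = epoch_loss([[0.9, 0.1], [0.2, 0.8]], [0, 1])
```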

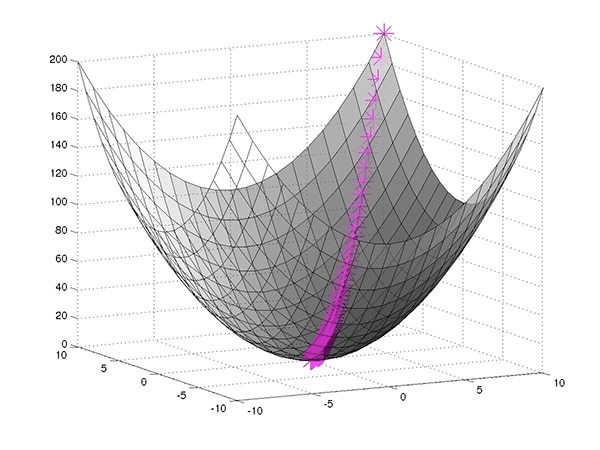

Represented as a function of the model parameters, the loss function describes a surface in the multidimensional space defined by those parameters. A well-tuned training process, then, essentially works to find an efficient path from the starting point (the initial random model parameters) to the minimum point on that surface (Figure 2).

Figure 2: Neural network training seeks to find the set of parameters that minimize the loss function (the difference between expected output and calculated output), using gradient descent to find the quickest path to the minimum loss point. (Image source: Mathworks)

At any point on a curve, the direction and rate of change are described by the slope of the line tangent to the curve at that point, that is, its derivative (or partial derivative in a multidimensional parameter space). For example, for some hypothetical parameter with value $w$ and positive partial derivative $p$ on the multidimensional surface, the parameter can be moved toward the minimum by setting $w \leftarrow w - \alpha p$. Here $\alpha$, called the learning rate, scales down each step to help avoid the situation where $p$ is so large that $w - p$ alone would jump over the minimum and never converge.
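
The update rule can be tried on a one-parameter loss. The sketch assumes a hypothetical loss $L(w) = (w - 3)^2$, whose derivative is $p = 2(w - 3)$, and shows how the learning rate $\alpha$ controls convergence: a small $\alpha$ settles at the minimum, while an overly large one overshoots on every step and diverges.

```python
def loss(w):
    """Hypothetical one-parameter loss surface with its minimum at w = 3."""
    return (w - 3.0) ** 2

def derivative(w):
    """Slope of the tangent line, dL/dw, at w."""
    return 2.0 * (w - 3.0)

def gradient_descent(w, learning_rate, steps):
    for _ in range(steps):
        p = derivative(w)
        w = w - learning_rate * p  # the update w <- w - alpha * p
    return w

w_small = gradient_descent(0.0, learning_rate=0.1, steps=100)  # converges
w_large = gradient_descent(0.0, learning_rate=1.1, steps=100)  # diverges
```

With $\alpha = 0.1$, each step multiplies the distance to the minimum by 0.8; with $\alpha = 1.1$, each step multiplies it by $-1.2$, so the parameter swings back and forth across the minimum with growing amplitude.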

Neural network training algorithms use this technique, called gradient descent, or its variations to modify model parameters after computing the loss function at each epoch. By stepping in the direction of steepest descent at each epoch, the training algorithm can eventually find the model parameters that deliver the minimum loss, or parameters close enough to the minimum that iterating through additional epochs would add little to the result.
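
Putting the pieces together, the sketch below trains a single sigmoid neuron (logistic regression, the simplest case of the networks described here) with full-batch gradient descent on a hypothetical safe/unsafe dataset in the spirit of the earlier industrial sensor example. The sensor values, learning rate, epoch count, and function names are assumptions chosen for illustration; the per-example gradient `(p - y) * x_i` is the standard partial derivative of cross-entropy loss for a sigmoid output.

```python
import math
import random

# Hypothetical training set: normalized [sensor_a, sensor_b] feature vectors
# paired with labels: 0 = safe, 1 = unsafe.
features = [[0.1, 0.2], [0.2, 0.1], [0.3, 0.3], [0.8, 0.9], [0.9, 0.7], [0.7, 0.8]]
labels = [0, 0, 0, 1, 1, 1]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """Probability that feature vector x carries the 'unsafe' label."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train(epochs=2000, learning_rate=0.5, seed=0):
    rng = random.Random(seed)
    w = [rng.uniform(-0.5, 0.5) for _ in range(2)]  # random starting parameters
    b = 0.0
    n = len(features)
    for _ in range(epochs):  # one epoch = one full pass over the training set
        grad_w = [0.0, 0.0]
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = predict(w, b, x) - y  # dLoss/dz for sigmoid + cross-entropy
            for i in range(2):
                grad_w[i] += error * x[i] / n
            grad_b += error / n
        # Gradient descent step: move each parameter against its partial derivative.
        w = [wi - learning_rate * gi for wi, gi in zip(w, grad_w)]
        b -= learning_rate * grad_b
    return w, b

w, b = train()
```

After training, `predict(w, b, [0.15, 0.15])` falls below 0.5 (safe) and `predict(w, b, [0.85, 0.85])` rises above it (unsafe), even though neither vector appears in the training set.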

Complex neural networks

This general training process applies conceptually to any neural network whether it resembles the basic architecture shown in Figure 1, adds many hidden layers in a deep neural network (DNN) design, or uses an entirely different architecture. Developers can find any number of neural network architectures designed to address specific applications. Among these, the CNN architecture has emerged as a preferred method for image recognition.

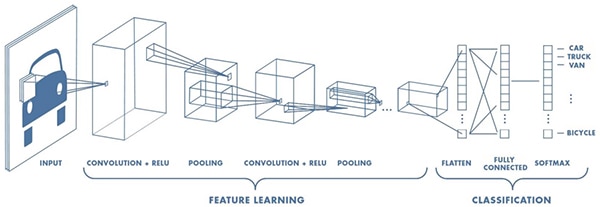

Designed for applications that require advanced recognition of images, handwriting, and other complex representations, CNNs use a pipeline approach that learns the important features within the presented input and classifies those features at its output (Figure 3).

Figure 3: A CNN combines a feature learning stage comprising transformations that filter an image through multiple receptive fields with a classification stage that recombines those results into a final output layer. (Image source: Mathworks)

A CNN begins with an input layer used to preprocess an image. The feature learning stage typically comprises multiple layers of convolution, rectified linear unit (ReLU), and pooling functions that combine to identify features such as edges, color groupings, and other distinctive elements of an image.

To perform this identification, the convolution layer examines the input volume of the image with multiple neurons, collectively called a depth column, that all connect to the same local region (their receptive field) in the input image and receive all of its color channels. Each neuron in the column applies a different filter, or kernel: a set of weights that slides across the image so that the same filter is evaluated at every receptive field position. At each position, the kernel computes the same sort of weighted sum of inputs described previously. Similarly, the ReLU layer serves as the activation function described previously. The pooling layer provides a specialized function that effectively downsamples the result received from the connected kernel.
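
The convolution, ReLU, and pooling steps can be sketched for the simplest case: one single-channel image and one kernel, stride 1, no padding, and 2×2 max pooling. Real CNN layers process all color channels and many kernels at once; those simplifications, the edge-detecting kernel values, and the function names are assumptions for illustration.

```python
def conv2d(image, kernel):
    """Slide kernel over image (stride 1, no padding), computing weighted sums.

    Like most CNN frameworks, this computes cross-correlation (the kernel is
    not flipped), which the deep learning literature still calls convolution.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Weighted sum over the kernel's receptive field at (r, c).
            s = sum(kernel[i][j] * image[r + i][c + j]
                    for i in range(kh) for j in range(kw))
            row.append(s)
        out.append(row)
    return out

def relu_map(fmap):
    """Apply ReLU element-wise to a feature map."""
    return [[max(0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Downsample by keeping the largest value in each size-by-size block."""
    return [[max(fmap[r + i][c + j] for i in range(size) for j in range(size))
             for c in range(0, len(fmap[0]) - size + 1, size)]
            for r in range(0, len(fmap) - size + 1, size)]

# A 5x5 image with a bright vertical stripe in columns 2 and 3.
image = [[0, 0, 1, 1, 0] for _ in range(5)]
# Hypothetical 2x2 kernel that responds to dark-to-bright transitions.
edge_kernel = [[-1, 1], [-1, 1]]
features = conv2d(image, edge_kernel)   # each row: [0, 2, 0, -2]
pooled = max_pool(relu_map(features))   # [[2, 0], [2, 0]]
```

The kernel produces a positive response at the stripe's left edge and a negative one at its right edge; ReLU discards the negative response, and pooling halves each spatial dimension while keeping the strongest activation.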

The CNN's final classification stage reconnects all the individual kernel outputs and generates outputs that indicate the probability that the input image corresponds to one of the specific labels used during training with labeled images.

Developers can find specific examples of CNN architectures ranging from relatively shallow models such as the original LeNet, AlexNet, and CIFAR ConvNet, to larger models such as the 22-layer GoogLeNet architecture and very deep models with hundreds of layers often used in the annual ImageNet Large Scale Visual Recognition Challenge. DNNs such as these are capable of the dramatic non-linear transformations needed to extract features and classify complex images with very low error rates.

Until relatively recently, however, implementing CNNs required a deep understanding of the underlying mathematics of probability, statistics, and linear algebra, at a minimum. Today developers can take advantage of sophisticated machine learning frameworks built on software stacks that significantly simplify implementation of complex neural network architectures, including CNNs.

ML frameworks

Machine learning frameworks such as MATLAB for Machine Learning, Microsoft's Cognitive Toolkit, Google’s TensorFlow, Samsung's Veles, and many others provide resources required to design, train, and deploy a neural network model. Within these frameworks, developers use machine learning libraries such as Keras or TensorFlow Estimators to describe neural network layers and implement training algorithms. In turn, these libraries use optimized math libraries such as NumPy to handle complex matrix operations used for gradient descent and loss function calculations. For the specific numeric calculations required for these operations, these libraries build on lower-level libraries such as Basic Linear Algebra Subprograms (BLAS). To speed training, these environments typically rely heavily on one or more graphics processing units (GPUs) with corresponding GPU-enabled libraries such as NumPy-compatible CuPy, BLAS-compatible cuBLAS, or NVIDIA's own CUDA Deep Neural Network library (cuDNN), among others. Finally, these various libraries draw on even lower-level drivers including cross-platform OpenCL or NVIDIA CUDA for GPU-enabled environments.

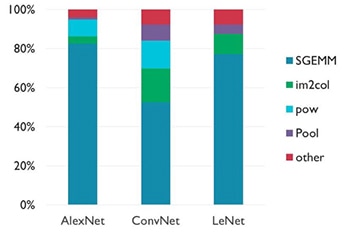

This deep reliance on libraries optimized for numeric math processing reflects the considerable role matrix operations play in neural network development. In particular, one operation, general matrix multiply (GEMM), dominates the calculations used in neural networks in general and CNNs in particular (Figure 4).
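
One reason GEMM dominates is that convolution layers are commonly lowered to matrix multiplication. In a technique often called im2col, every receptive-field patch is unrolled into a column, every kernel is flattened into a row, and a single GEMM then produces all the output feature maps at once. The sketch below assumes a single-channel image, stride 1, and no padding; `gemm`, `im2col`, and `conv_as_gemm` are hypothetical names, and in practice an optimized BLAS routine would typically replace the naive `gemm`.

```python
def gemm(a, b):
    """Naive general matrix multiply C = A x B (A is m-by-k, B is k-by-n)."""
    m, k, n = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]

def im2col(image, kh, kw):
    """Unroll every kh-by-kw patch of a single-channel image into one column."""
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    rows = []
    for i in range(kh):
        for j in range(kw):
            # Row (i*kw + j) holds pixel (i, j) of every patch, one patch per column.
            rows.append([image[r + i][c + j]
                         for r in range(out_h) for c in range(out_w)])
    return rows, out_h, out_w

def conv_as_gemm(image, kernels):
    """Apply several kh-by-kw kernels with a single GEMM call."""
    kh, kw = len(kernels[0]), len(kernels[0][0])
    patches, out_h, out_w = im2col(image, kh, kw)
    # Flatten each kernel into one row: a (num_kernels x kh*kw) weight matrix.
    weights = [[k[i][j] for i in range(kh) for j in range(kw)] for k in kernels]
    result = gemm(weights, patches)  # (num_kernels x out_h*out_w)
    # Reshape each result row back into an out_h-by-out_w feature map.
    return [[row[r * out_w:(r + 1) * out_w] for r in range(out_h)]
            for row in result]

image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
kernels = [[[1, 0], [0, 1]],    # hypothetical diagonal-sum kernel
           [[1, -1], [0, 0]]]   # hypothetical horizontal-difference kernel
maps = conv_as_gemm(image, kernels)
```

Because every output value of every kernel becomes one dot product inside a single matrix multiply, speeding up GEMM speeds up the whole convolution layer.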

Figure 4: Although the specifics of its impact vary with different CNN architectures, the single-precision floating-point general matrix multiply (SGEMM) operation dominates calculations used in training and inference. (Image source: Arm®)

Given the preponderance of matrix operations, the capabilities of the underlying hardware play a central role in determining the training time for a neural network and the inference time for a completed model. In practical terms, this means that even a relatively low-level general purpose system such as a Raspberry Pi can be used for CNN training and inference if the target application has modest performance requirements. In fact, any system designed around general purpose processors such as Arm Cortex® MCUs can serve as an ML platform.

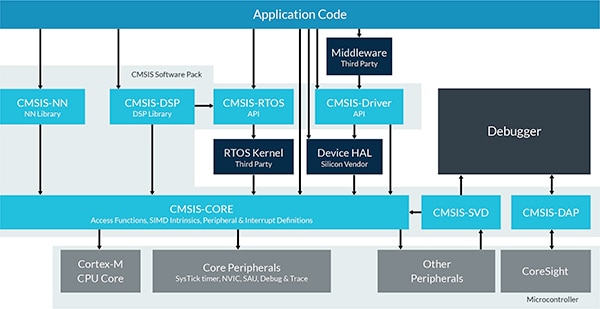

To help developers deploy neural networks on its processors, Arm provides its specialized Compute Library optimized for Arm Cortex-A series processors such as the Texas Instruments Sitara, NXP i.MX6, and NXP i.MX8 devices. For Arm Cortex-M-based MCUs, Arm provides CMSIS-NN, a neural network specific library within its Cortex Microcontroller Software Interface Standard (CMSIS) for Arm Cortex-M-series devices (Figure 5). Designed as an add-on package for CMSIS, the CMSIS-NN library augments CMSIS-CORE with optimized functions for convolution layers, pooling, activation (e.g., ReLU), and other functions commonly used in neural network models.

Figure 5: The CMSIS-NN library augments CMSIS-CORE with optimized functions commonly used in neural network models. (Image source: Arm)

For example, using the CMSIS-NN library, developers can implement a model on an STMicroelectronics Mbed-compatible NUCLEO-F746ZG development board, which uses an Arm Cortex-M7-based STM32F746ZG MCU.

Specialized AI chips will eventually provide significant performance enhancements for neural networks and other machine learning algorithms, but such chips remain largely in the planning stages while algorithms solidify.

Developers who need increased performance now can turn to readily available FPGAs such as the Intel Arria 10 GX, the Lattice Semiconductor iCE40 UltraPlus, or the Lattice ECP5. This class of FPGA integrates DSP blocks able to speed GEMM operations and embeds memory blocks that reduce the memory access bottleneck limiting performance in these kinds of compute-intensive operations.

Lattice Semiconductor takes FPGA-based models a step further with its SensAI stack by providing machine learning FPGA IP and a neural network compiler. Using SensAI, developers can implement advanced neural networks on available Lattice FPGA development platforms including the Lattice ICE40UP5K-MDP-EVN mobile development board, and the Lattice LF-EVDK1-EVN embedded vision development kit.

Although a faster hardware platform generally means faster training and inference times, limited resources in the target platform usually require a careful balance of inference time, latency, memory footprint, and power consumption. Machine learning experts have responded to these requirements with further refinements to each neural network architecture. Methods such as reduced-bit-width quantization of both model parameters and activations have yielded a 3x to 4x reduction in memory footprint compared with earlier approaches. Further refinements continue to reduce model size and complexity, enabling faster calculations for shorter inference time, lower latency, and lower power consumption.
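
The text does not specify a quantization scheme. As one common example, the sketch below assumes symmetric, per-tensor linear quantization of 32-bit floating-point weights to signed 8-bit integers with a single scale factor; it is not the specific format used by any library mentioned here. Storing each weight in one byte instead of four gives a 4x reduction in weight storage, at the cost of a rounding error of at most half the scale factor per weight. The weight values and function names are hypothetical.

```python
import struct

def quantize_int8(values):
    """Symmetric linear quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 127.0
    # Round to the nearest integer step and clamp to the int8 range [-127, 127].
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float values from the 8-bit codes."""
    return [qi * scale for qi in q]

weights = [0.82, -0.41, 0.05, -1.27, 0.66, 0.0, 0.33, -0.9]  # hypothetical weights
q, scale = quantize_int8(weights)

# Compare storage size: 4 bytes per float32 versus 1 byte per int8.
float32_bytes = len(struct.pack(f"<{len(weights)}f", *weights))
int8_bytes = len(struct.pack(f"<{len(q)}b", *q))
```

Activations can be quantized the same way at run time, which is what lets integer-only MCU and FPGA datapaths handle the GEMM-heavy layers described earlier.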

The combination of innovative model architectures, training methods, and specialized hardware continues to bring advanced machine learning methods closer to the edge and within the grasp of any developer.

Conclusion

Machine learning is emerging as a powerful solution for user recognition, object identification, and many other features desired in smart products. Although machine learning techniques were once limited to use by AI experts, the wide availability of machine learning frameworks has opened the door for broad application by mainstream developers.

Even as machine learning capabilities continue to evolve rapidly, developers can already begin using these frameworks with general purpose processors and FPGAs to employ machine learning in a broad array of applications.

Disclaimer: The opinions, beliefs, and viewpoints expressed by the various authors and/or forum participants on this website do not necessarily reflect the opinions, beliefs, and viewpoints of DigiKey or official policies of DigiKey.