Accelerate Industrial IoT Application Development—Part 1: Simulating IoT Device Data

Contributed By DigiKey's North American Editors

2020-03-04

Editor’s Note: Embedded application development projects are often delayed as developers wait for hardware implementations of new devices to become available. Industrial Internet of Things (IIoT) application development faces a similar bottleneck as it waits for the sensor data required by applications such as industrial predictive maintenance systems or machine-learning-based facility automation systems. This two-part series explores alternatives for providing the early data streams needed to accelerate IIoT application development. Part 1 describes the use of simulation methods to generate those data streams. Part 2 discusses options for rapidly prototyping sensor systems that generate data.

Large-scale Industrial Internet of Things (IIoT) applications present multiple challenges that can stall deployments and leave companies questioning the return on the many resources required for implementation. To prevent such situations and help developers ascertain the benefits of IIoT deployment more quickly, teams need ready access to data for simulating that deployment.

Using simulation methods to generate realistic data streams, developers can begin IIoT application development well before IoT network deployment, and even refine the definition of the IIoT sensor network itself.

This article will show how the various IoT cloud platforms provide for data simulation and will introduce example gateways from Multi-Tech Systems Inc. that can further accelerate deployment.

The case for simulating IIoT data

The use of simulated data to drive applications and systems development is of course nothing new. Developers have used system-level simulation methods for decades to stress test computing infrastructures and connectivity services. These tests serve an important function in verifying robustness of static configurations. In cloud service platforms, these tests provide a relatively simple method to verify auto-scaling of virtual machines and other cloud resources.

IIoT applications share these same requirements and more. Besides aiding in load testing and auto-scaling, data simulation provides an important tool for verifying the integration of the many disparate services and resources needed to implement software as complex as an enterprise-level IIoT application. Beyond those more fundamental practices, data simulation can accelerate development of complex IIoT applications built on the sophisticated service platforms available from leading cloud providers.

Software perspective

IIoT applications operate on complex architectures that look significantly different to application software developers than to sensor and actuator system developers. To the latter, a large-scale IIoT architecture is a vast assembly of sensors and actuators that interface with the physical processes which are the subject of the overall application. To application software developers, an enterprise-level IIoT architecture comprises a number of services whose coordinated activity ultimately delivers the functionality of the application.

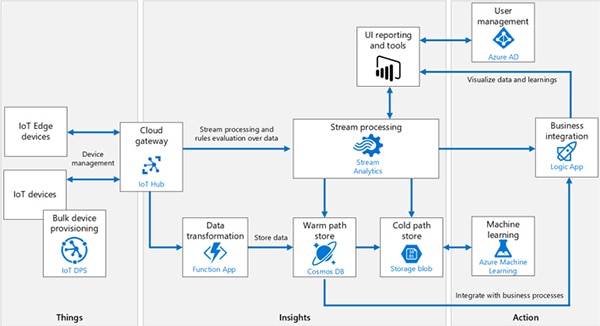

The Microsoft Azure IoT reference architecture offers a representative view of typical IIoT applications (and IoT applications in general) from the application software perspective. This view summarizes the multiple functional services that a typical application knits together in the cloud to deliver insights and actions based on data from the endpoint and edge devices in the periphery (Figure 1).

Figure 1: The Microsoft Azure IoT reference architecture illustrates the multiple types of cloud services and resources an IIoT application typically requires to deliver useful insights and action from data generated by device networks in the periphery. (Image source: Microsoft Corp.)

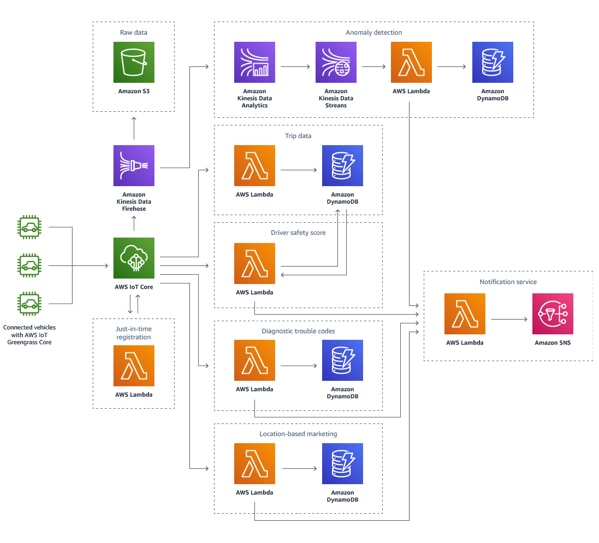

Specific application solutions deploy these cloud resources in appropriate combinations, functionally connected through standardized interchange mechanisms and coordinated by application logic. In its connected vehicle solution, for example, Amazon Web Services (AWS) suggests how cloud services might be mixed and matched in modules responsible for providing different features and capabilities of the application (Figure 2).

Figure 2: The AWS connected vehicle solution provides a representative view of a typical large-scale IoT application's orchestration of cloud services to deliver needed functional capabilities. (Image source: Amazon Web Services)

As these architectural representations suggest, the software development effort required to create an IIoT application is every bit as challenging and expansive as implementing peripheral networks of sensor and actuator systems. Few organizations can afford to delay development of this complex software until the device network is able to generate sufficient data. In fact, deployment of the device network might need to wait for further definition and refinement that can arise as analytics specialists and machine learning experts begin to work with application results. In the worst case, device network deployment and software development find themselves deadlocked: each dependent on results from the other.

Fortunately, the solution to this dilemma lies in the nature of IoT architectures. Although cloud service architectures such as those illustrated above from Microsoft and AWS differ in detail, they share an architectural feature typically found in IoT cloud platforms: a well-defined interface service, implemented as a module or layer, that separates the peripheral network of IoT devices from the cloud-based software application. Besides providing uniform connectivity, these interface services are vital for device management and security, as well as for other key capabilities required in large-scale IIoT applications.

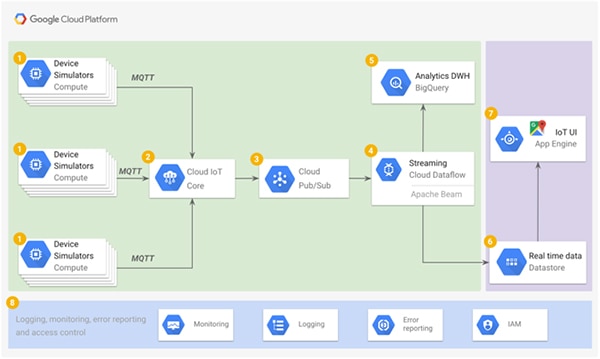

In the Microsoft Azure cloud, this interface service is called Azure IoT Hub (see Figure 1 again); in the AWS cloud, it's AWS IoT Core (see Figure 2 again). In the Google Cloud Platform, this interface is Cloud IoT Core, and in IBM Cloud, it's the IBM Watson IoT Platform service. Other platforms such as the ThingWorx IoT Platform similarly connect devices through services such as ThingWorx Edge Microserver, ThingWorx Kepware Server, or protocol adapter toolkits. In short, any cloud platform needs to provide a consistent interface service that funnels data from the periphery to cloud services; otherwise, it risks a confused tangle of connections from peripheral devices directly to individual resources deep within the cloud.

Injecting simulated data

Using each IoT platform's software development kit (SDK), developers can inject simulated sensor data directly into the platform's interface service at the levels of volume, velocity and variety required to verify application functionality and performance. Simulated data generated at the desired rate and resolution reaches the interface service using standard protocols such as MQ Telemetry Transport (MQTT), Constrained Application Protocol (CoAP), and others. To the interface service (and downstream application software), the simulated data streams are indistinguishable from data acquired by a hardware sensor system. When device networks are ready to come online, their sensor data streams simply replace the simulated data streams reaching the interface service.
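
As a minimal sketch of this idea, the Python below generates quantized temperature readings for several simulated devices and hands each JSON payload to a caller-supplied `publish(topic, payload)` function at a chosen rate. The helper names, the payload fields, and the uniform random distribution are assumptions for illustration; the topic layout borrows the `/devices/<id>/events` form used in Google's sample code. In practice, `publish` could be a thin wrapper around a connected MQTT client from the platform's SDK.

```python
import json
import random
import time


def simulated_readings(device_ids, low, high, resolution, seed=None):
    """Endlessly yield (device_id, JSON payload) pairs, one device at a time.

    Values are drawn uniformly from [low, high] and snapped to `resolution`.
    """
    rng = random.Random(seed)  # a seeded generator makes runs repeatable
    while True:
        for device_id in device_ids:
            value = rng.uniform(low, high)
            value = round(round(value / resolution) * resolution, 6)
            payload = json.dumps({"deviceId": device_id,
                                  "temperature": value,
                                  "ts": time.time()})
            yield device_id, payload


def run(stream, count, rate_hz, publish):
    """Send `count` payloads from `stream`, pausing 1/rate_hz between them."""
    for _ in range(count):
        device_id, payload = next(stream)
        # Topic layout borrowed from the Google sample's telemetry topic.
        publish("/devices/{}/events".format(device_id), payload)
        time.sleep(1.0 / rate_hz)
```

For example, `run(simulated_readings(["sensor-1", "sensor-2"], -40.0, 85.0, 0.5), 100, 10.0, print)` prints 100 topic/payload pairs at roughly 10 per second. Raising the device count, the rate, or the number of fields is how a script like this approximates the volume, velocity, and variety of a real device network.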

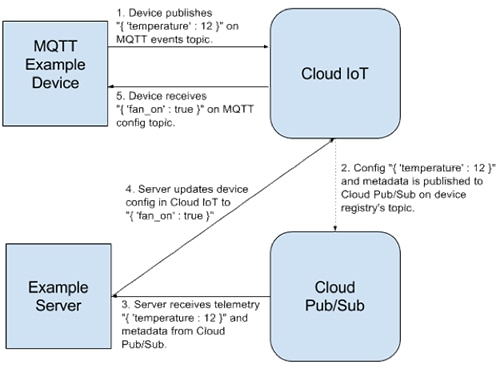

Cloud platform providers typically support this data simulation approach with different levels of capability. For example, Google demonstrates a basic simulation-driven application with a reference architecture and sample code that implement a simple control loop for a temperature-controlled fan. Like the architectures illustrated earlier, this architecture takes advantage of Google Cloud Platform services fed by the Google Cloud IoT Core interface service (Figure 3).

Figure 3: In any IoT cloud platform, device simulators use the same communications protocols used by physical devices to feed data to an interface service such as the Google Cloud IoT Core for the Google Cloud Platform application architecture shown here. (Image source: Google)

In this sample application, temperature sensor device simulators generate data at a selected update rate and pass the data to the Google Cloud IoT Core interface service using the MQTT messaging protocol. In turn, that interface service uses the platform's standard publish-subscribe (pub/sub) mechanism to pass the data to a simulated server, which responds with a command to turn the fan on or off as needed (Figure 4).

Figure 4: A sample Google application demonstrates a basic control loop comprising a simulated device that sends data through the Google Cloud IoT Core to a simulated server using standard communications methods. (Image source: Google)
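
In the actual sample, those hops run through Cloud IoT Core over MQTT and through the platform's pub/sub service. The sketch below collapses them into a hypothetical in-process broker so the message flow can be traced without a cloud account: the device publishes telemetry on an events topic, a server callback reacts by publishing a configuration message, and a device callback applies it. `InMemoryBroker`, the `dev-1` device ID, and the callbacks are invented for illustration and are not Google Cloud APIs.

```python
import json


class InMemoryBroker:
    """Toy topic-based publish/subscribe broker (not a Google Cloud API).

    publish() delivers a message synchronously to every callback that has
    subscribed to exactly that topic string.
    """

    def __init__(self):
        self._subscribers = {}

    def subscribe(self, topic, callback):
        self._subscribers.setdefault(topic, []).append(callback)

    def publish(self, topic, message):
        for callback in self._subscribers.get(topic, []):
            callback(topic, message)


broker = InMemoryBroker()
device_state = {"fan_on": False}


def server_on_telemetry(topic, message):
    # Server side: inspect telemetry and, if needed, push a new config.
    if json.loads(message)["temperature"] > 10:
        broker.publish("/devices/dev-1/config", json.dumps({"fan_on": True}))


def device_on_config(topic, message):
    # Device side: apply whatever configuration arrives.
    device_state.update(json.loads(message))


broker.subscribe("/devices/dev-1/events", server_on_telemetry)
broker.subscribe("/devices/dev-1/config", device_on_config)
broker.publish("/devices/dev-1/events", json.dumps({"temperature": 12}))
print(device_state)  # prints {'fan_on': True}
```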

Google provides sample Python code that implements this basic application. In this code, a Device class instance includes a method that updates the simulated temperature based on the state of the simulated fan. The main routine calls that method at a specified rate and sends the data over an MQTT connection provided by the Eclipse Paho MQTT Python client module, paho-mqtt (Listing 1).

```python
class Device(object):
    """Represents the state of a single device."""

    def __init__(self):
        self.temperature = 0
        self.fan_on = False
        self.connected = False

    def update_sensor_data(self):
        """Pretend to read the device's sensor data.

        If the fan is on, assume the temperature decreased one degree,
        otherwise assume that it increased one degree.
        """
        if self.fan_on:
            self.temperature -= 1
        else:
            self.temperature += 1

    # ...


def main():
    # ...

    device = Device()

    client.on_connect = device.on_connect
    client.on_publish = device.on_publish
    client.on_disconnect = device.on_disconnect
    client.on_subscribe = device.on_subscribe
    client.on_message = device.on_message

    client.connect(args.mqtt_bridge_hostname, args.mqtt_bridge_port)

    client.loop_start()

    # This is the topic that the device will publish telemetry events
    # (temperature data) to.
    mqtt_telemetry_topic = '/devices/{}/events'.format(args.device_id)

    # This is the topic that the device will receive configuration updates on.
    mqtt_config_topic = '/devices/{}/config'.format(args.device_id)

    # Wait up to 5 seconds for the device to connect.
    device.wait_for_connection(5)

    # Subscribe to the config topic.
    client.subscribe(mqtt_config_topic, qos=1)

    # Update and publish temperature readings at a rate of one per second.
    for _ in range(args.num_messages):
        # In an actual device, this would read the device's sensors. Here,
        # you update the temperature based on whether the fan is on.
        device.update_sensor_data()

        # Report the device's temperature to the server by serializing it
        # as a JSON string.
        payload = json.dumps({'temperature': device.temperature})
        print('Publishing payload', payload)
        client.publish(mqtt_telemetry_topic, payload, qos=1)

        # Send events every second.
        time.sleep(1)

    client.disconnect()
    client.loop_stop()
    print('Finished loop successfully. Goodbye!')
```

Listing 1: This snippet from the Google sample application illustrates how the main routine periodically updates a Device class instance which stores the current value of the simulated temperature sensor and provides a method that updates that value depending on the state of the simulated fan. (Code source: Google)

In turn, a Server class instance provides a method that updates the fan state depending on the temperature data received from the Device class instance (Listing 2).

```python
class Server(object):
    """Represents the state of the server."""

    # ...

    def _update_device_config(self, project_id, region, registry_id, device_id,
                              data):
        """Push the data to the given device as configuration."""
        config_data = None
        print('The device ({}) has a temperature '
              'of: {}'.format(device_id, data['temperature']))
        if data['temperature'] < 0:
            # Turn off the fan.
            config_data = {'fan_on': False}
            print('Setting fan state for device', device_id, 'to off.')
        elif data['temperature'] > 10:
            # Turn on the fan
            config_data = {'fan_on': True}
            print('Setting fan state for device', device_id, 'to on.')
        else:
            # Temperature is OK, don't need to push a new config.
            return
```

Listing 2: In this snippet from the Google sample application, the _update_device_config() method defined in the Server class provides the business logic for the application, setting the fan state to on when the temperature rises above 10 and to off when it falls below 0. (Code source: Google)
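
Because the two listings depend on a live MQTT connection and Google Cloud credentials, it can help to check the control logic on its own first. The following sketch reuses the update rule from Listing 1 and the thresholds from Listing 2 in a single synchronous loop. `SimDevice`, `decide_fan_config()`, and `run_loop()` are hypothetical names, and applying the new configuration within the same tick is a simplification of the asynchronous MQTT round trip in the real sample.

```python
class SimDevice:
    """Same update rule as Device.update_sensor_data() in Listing 1."""

    def __init__(self):
        self.temperature = 0
        self.fan_on = False

    def update_sensor_data(self):
        # Fan on: one degree cooler per tick; fan off: one degree warmer.
        self.temperature += -1 if self.fan_on else 1


def decide_fan_config(temperature):
    """Same thresholds as Server._update_device_config() in Listing 2.

    Returns a config dict, or None when no new config should be pushed.
    """
    if temperature < 0:
        return {"fan_on": False}
    if temperature > 10:
        return {"fan_on": True}
    return None


def run_loop(steps):
    """Run device and server logic in lockstep; return the temperature trace."""
    device = SimDevice()
    history = []
    for _ in range(steps):
        device.update_sensor_data()                     # device "reads" sensor
        config = decide_fan_config(device.temperature)  # server reacts
        if config is not None:
            device.fan_on = config["fan_on"]            # device applies config
        history.append(device.temperature)
    return history


if __name__ == "__main__":
    print(run_loop(40))
```

Since the fan turns on only above 10 and off only below 0, the thresholds form a hysteresis band: after a warm-up ramp from 1 to 11, the simulated temperature in this synchronous version keeps cycling between -1 and 11.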

Besides Google's sample code, developers can find dozens of open-source IoT device, system, and network simulators in repositories such as GitHub. For example, Microsoft's open-source Raspberry Pi system simulator code includes pre-built integration with the Azure IoT Hub for rapid development of cloud-based applications that interface with Raspberry Pi boards. In addition, low-code programming tools like Node-RED support pre-built modules (nodes) for feeding simulated sensor data to the IoT service interfaces of the leading cloud platforms. Using these approaches, developers can easily generate a stream of sensor data.

Running simulations at scale

The difficulty with using device-level simulators and related tools is that managing the data simulation can become a project in itself. To run the simulators, developers need to provision and maintain resources as they would for any application. Of greater concern, the device models used to generate realistic data become a separate project outside the IIoT application development process. As development proceeds, developers need to ensure that device models remain functionally synchronized with any changes in the definition of the IIoT device network and application. For enterprise-level IIoT applications, developers may find these simulations difficult to scale, and the simulations may even begin to draw on resources needed to develop the application itself.

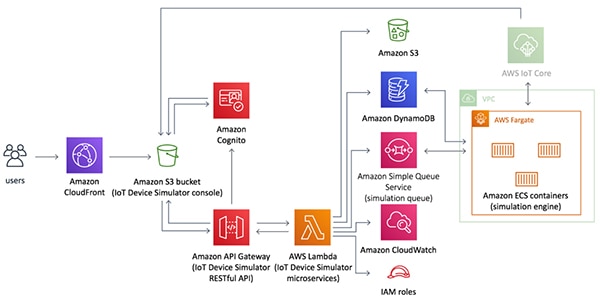

The major IoT cloud platform providers address these concerns with IoT device simulation solutions designed to scale as easily as other cloud resources on their respective platforms. For example, the AWS IoT Device Simulator provides an AWS CloudFormation template that deploys an Amazon Virtual Private Cloud (VPC) network connecting microservices implemented in containers running on the AWS Fargate serverless compute engine (Figure 5).

Figure 5: The AWS IoT Device Simulator combines multiple AWS services to deliver a scalable stream of device data to the same AWS IoT Core used by physical devices. (Image source: Amazon Web Services)

Developers access the simulation interactively through a graphical user interface (GUI) console hosted in Amazon S3, or programmatically through the IoT Device Simulator application programming interface (API) that the CloudFormation template creates in Amazon API Gateway. During a simulation run, the IoT Device Simulator microservice pulls device configurations from the Amazon DynamoDB NoSQL database in accordance with an overall simulation plan described in its own configuration item.

The device configurations are JSON records that define the device attribute names (temperature, for example), range of values (-40 to 85, say), device update interval, and simulation duration, among other information. Developers can add device types interactively through the console or programmatically through the API. Using normal DevOps methods, developers can quickly scale the device types, configuration, and infrastructure to achieve the desired data update rates reaching AWS IoT Core and the downstream application.
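
To make the idea of a data-driven device type concrete, the sketch below defines a JSON record with attribute ranges, an update interval, and a run duration, generates the messages one simulated device would emit, and estimates the aggregate message rate a fleet would push to the interface service. The field names (`typeName`, `intervalSeconds`, and so on) are hypothetical and do not reproduce the AWS IoT Device Simulator's actual schema.

```python
import json
import random

# Hypothetical device-type record; field names are illustrative only and do
# not reproduce the AWS IoT Device Simulator's actual schema.
DEVICE_TYPE = json.loads("""
{
  "typeName": "boiler-sensor",
  "attributes": [
    {"name": "temperature", "min": -40, "max": 85},
    {"name": "pressure", "min": 90, "max": 110}
  ],
  "intervalSeconds": 2,
  "durationSeconds": 60
}
""")


def messages_for(device_type, rng):
    """Yield every message one simulated device emits during the run."""
    steps = device_type["durationSeconds"] // device_type["intervalSeconds"]
    for step in range(steps):
        message = {"t": step * device_type["intervalSeconds"]}
        for attr in device_type["attributes"]:
            message[attr["name"]] = rng.uniform(attr["min"], attr["max"])
        yield message


def aggregate_rate(fleet):
    """Messages per second reaching the interface service.

    `fleet` is a list of (device_type, device_count) pairs.
    """
    return sum(count / dtype["intervalSeconds"] for dtype, count in fleet)


if __name__ == "__main__":
    print(next(messages_for(DEVICE_TYPE, random.Random(0))))
    print(aggregate_rate([(DEVICE_TYPE, 5000)]), "messages/s")
```

With a 2-second interval, 5,000 devices of this type produce 5,000 / 2 = 2,500 messages per second, the kind of figure worth comparing against interface-service limits and downstream capacity before scaling up a run.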

Microsoft's Azure device simulator goes further: developers can supplement the basic list of attributes with a set of behaviors that the device exhibits during the simulation run, as well as a set of methods that the cloud application can call directly.
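
The distinction between attributes, behaviors, and methods can be sketched as a small class: the behavior is a function of simulated time (here, an assumed daily sine-wave temperature profile), and a method is a named handler that the cloud application can invoke with a payload. This is a hypothetical illustration in plain Python, not the Azure device simulation API; the `set_offset` method and the HTTP-style status codes are invented for the example.

```python
import math


class BehavioralDevice:
    """Hypothetical simulated device with one behavior and one method."""

    def __init__(self, base=20.0, amplitude=5.0, period_s=86400.0):
        self.base = base            # mean temperature, degrees C
        self.amplitude = amplitude  # peak deviation from the mean
        self.period_s = period_s    # one full cycle per simulated day
        self.offset = 0.0           # adjustable through set_offset()
        # Table of methods a cloud application may call by name.
        self.methods = {"set_offset": self.set_offset}

    def behavior(self, t_s):
        """Temperature the device reports at simulated time t_s (seconds)."""
        phase = 2 * math.pi * t_s / self.period_s
        return self.base + self.amplitude * math.sin(phase) + self.offset

    def set_offset(self, value):
        """Method: shift every future reading by `value` degrees."""
        self.offset = float(value)
        return {"status": 200, "offset": self.offset}

    def invoke(self, method_name, payload):
        """Dispatch a cloud-initiated call; unknown names get a 404-style reply."""
        handler = self.methods.get(method_name)
        if handler is None:
            return {"status": 404}
        return handler(payload)
```

Keeping methods in a name-to-handler table means the simulated device can answer the same calls a physical device will later answer, so application code that invokes them does not change when real hardware arrives.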

Digital twins

This kind of device data simulation is closely tied conceptually to the digital twin capabilities emerging in commercial IoT cloud platforms. Whereas device shadows typically provide only a static representation of device state, digital twins extend the virtual device model to match both the state and the behavior of the physical device.
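
The difference can be sketched in a few lines. A shadow-style document records only desired and reported state, and the useful computation is the delta between them; a twin adds a behavioral model that can predict what the device should report next. Both pieces below are simplified, hypothetical illustrations (flat dictionaries only, and the twin reuses the toy one-degree-per-tick fan rule from Google's sample), not any platform's shadow or twin API.

```python
_MISSING = object()


def shadow_delta(desired, reported):
    """Desired entries the device has not yet reported (flat dicts only)."""
    return {key: value for key, value in desired.items()
            if reported.get(key, _MISSING) != value}


class TwinModel:
    """State plus behavior: predicts the next reading from the current state."""

    def __init__(self, temperature, fan_on):
        self.temperature = temperature
        self.fan_on = fan_on

    def predict_next(self):
        # Toy rule from Google's sample: -1 per tick with the fan on, +1 off.
        return self.temperature + (-1 if self.fan_on else 1)


# A shadow can only say that the fan has been asked to turn on...
print(shadow_delta({"fan_on": True}, {"fan_on": False, "temperature": 11}))
# prints {'fan_on': True}
# ...while the twin can also say what temperature to expect next.
print(TwinModel(11, True).predict_next())  # prints 10
```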

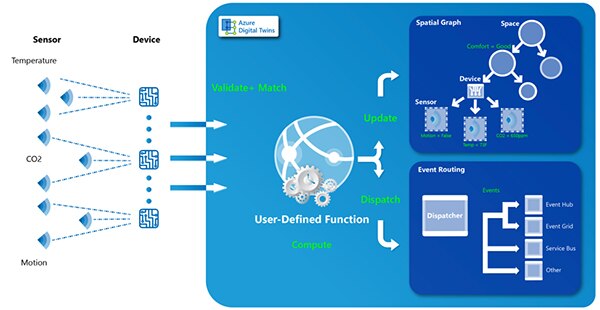

In Microsoft's Azure, the Azure Digital Twins service allows developers to include user-defined functions to define behavior during a device simulation, still feeding results to the Azure IoT Hub as before. Regardless of whether it's simulated or real, incoming data is then dispatched to an event routing service for further distribution in the application. Microsoft also uses the digital twin data to create spatial graphs that depict the interactions and state among elements in complex hierarchical environments like an industrial automation system comprising multiple networks (Figure 6).

Figure 6: The Microsoft Azure Digital Twins service lets developers build virtual devices that match their physical counterparts in features and capabilities and provide the foundation for sophisticated services like spatial graphs of complex IIoT hierarchies. (Image source: Microsoft)

For IIoT applications, digital twins can provide a powerful mechanism able to support the entire lifecycle of applications built around these capabilities. In early stages of development, digital twins can be driven at scale by the platform's device simulation services. As physical IIoT networks come online, those simulated data feeds to the digital twin can be replaced by device data feeds. Later, in a fully deployed IIoT application, developers can use any differences found between a physical device and its digital twin as additional input to predictive maintenance algorithms or security intrusion detectors, for example. Throughout the lifecycle, digital twins can shield the application from network outages or significant changes in configuration of IIoT device networks.
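
As a minimal sketch of the predictive maintenance idea, the code below compares each physical reading with the twin's one-step prediction and flags readings whose residual exceeds a threshold. It assumes the toy fan model from Google's sample (one degree per tick, up with the fan off and down with it on); `twin_residuals()` and `flag_anomalies()` are hypothetical helpers, and a production twin would use a calibrated model and a statistically chosen threshold.

```python
def twin_residuals(readings, fan_states, start_temp):
    """Compare each physical reading with the twin's one-step prediction.

    readings[i] is the measured temperature after tick i, and fan_states[i]
    is the fan state the twin assumes during tick i (toy +1/-1 model).
    """
    residuals = []
    previous = start_temp
    for reading, fan_on in zip(readings, fan_states):
        prediction = previous + (-1 if fan_on else 1)
        residuals.append(reading - prediction)
        previous = reading  # re-anchor the twin on the measured value
    return residuals


def flag_anomalies(residuals, threshold):
    """Indices where |residual| exceeds threshold (e.g. stuck fan, bad sensor)."""
    return [i for i, r in enumerate(residuals) if abs(r) > threshold]
```

For example, `flag_anomalies(twin_residuals([1, 2, 3, 6, 7], [False] * 5, 0), 1.5)` returns `[3]`: the jump from 3 to 6 is two degrees more than the model predicts, which might point to a failing fan or a faulty sensor.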

The emergence of digital twins in IoT platforms also provides a secondary benefit by offering a standardized approach for describing device model attributes and behaviors. For its description language, Microsoft's Azure Digital Twins service uses JSON-LD (JavaScript Object Notation for Linked Data). Backed by the World Wide Web Consortium (W3C), JSON-LD provides a standard format for serializing linked data based on the industry-standard JSON format, which is already used in a number of other application segments.
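
Because JSON-LD is ordinary JSON with a few reserved keys such as `@context`, `@id`, and `@type`, standard JSON tooling can read and write device models. The sketch below expresses a thermostat model in the style of the Digital Twins Definition Language (DTDL) v2, a JSON-LD-based language used by later releases of Azure Digital Twins. The `dtmi:com:example:Thermostat;1` identifier is made up, and the exact vocabulary should be checked against the DTDL specification rather than taken from this example.

```python
import json

# Illustrative thermostat model in the style of DTDL v2 (a JSON-LD-based
# language). The identifiers below are made up for this example.
THERMOSTAT_MODEL = {
    "@context": "dtmi:dtdl:context;2",
    "@id": "dtmi:com:example:Thermostat;1",
    "@type": "Interface",
    "displayName": "Thermostat",
    "contents": [
        {"@type": "Telemetry", "name": "temperature", "schema": "double"},
        {"@type": "Property", "name": "fanOn", "schema": "boolean",
         "writable": True},
    ],
}


def names_by_kind(model, kind):
    """List the names of all model contents with the given @type.

    Assumes each @type is a single string (DTDL also allows arrays).
    """
    return [item["name"] for item in model["contents"]
            if item["@type"] == kind]


# JSON-LD is ordinary JSON, so the standard library can serialize it.
serialized = json.dumps(THERMOSTAT_MODEL, indent=2)
```

A simulator could read the `Telemetry` entries of such a model to decide which fields to generate, which keeps the simulated data aligned with the same description the application uses.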

Standardized digital twin descriptions can further accelerate development with the emergence of repositories of pre-built digital twin descriptions for sensors and actuators. For example, Bosch already provides open-source digital twin descriptions of several of its sensors written in the Eclipse Vorto language and published in the Eclipse Vorto repository. Using a grammar familiar to most programmers, the Eclipse Vorto language provides a simple method for describing models and interfaces for digital twins. Later, developers can convert their Vorto language descriptions to JSON-LD or other formats as needed.

Building out the IIoT application

Whether built with discrete simulators or microservice-oriented platforms, device data simulation provides an effective software-based solution for accelerating application development. For IIoT applications using multiple device networks, migrating the device simulations to the edge can further ease the transition to deployment without sacrificing access to representative data early in application development.

Edge computing systems are playing an increasingly vital role in large-scale IoT applications. These systems provide local resources needed for emerging requirements ranging from basic data preprocessing to reduce the amount of data reaching the cloud, to advanced classification capabilities like machine learning inference models. Edge computing systems also play a more fundamental role as communication gateways between field-area device networks and high-speed backhaul networks.
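
Two common preprocessing steps of the kind described here can be sketched briefly: summarizing fixed-size windows of raw readings into min/mean/max records, and a deadband filter that forwards a reading only when it has changed by more than a threshold since the last forwarded value. Both helpers are hypothetical illustrations; the window size and threshold would depend on the process being monitored.

```python
from statistics import mean


def summarize_windows(readings, window):
    """Collapse each block of `window` raw readings into one summary record.

    Cuts upstream message count by roughly a factor of `window` while keeping
    min/mean/max for each block; a trailing partial block is also kept.
    """
    summaries = []
    for start in range(0, len(readings), window):
        block = readings[start:start + window]
        summaries.append({"min": min(block), "mean": mean(block),
                          "max": max(block), "n": len(block)})
    return summaries


def deadband(readings, threshold):
    """Forward a reading only if it moved more than `threshold` since the last one sent."""
    sent = []
    for value in readings:
        if not sent or abs(value - sent[-1]) > threshold:
            sent.append(value)
    return sent
```

For a sensor sampled once per second, a 60-sample window turns 3,600 messages per hour into 60 summaries, a 60-fold reduction before the data reaches the cloud.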

Gateways such as the Multi-Tech Systems’ programmable MultiConnect Conduit family provide platforms that combine communications support with edge processing capabilities. The Multi-Tech MTCAP-915-001A for 915 megahertz (MHz) regions and the MTCAP-868-001A for 868 MHz regions provide LoRaWAN connectivity for aggregating field-area network device data, and Ethernet or 4G-LTE connectivity on the cloud side. Based on the open-source Multi-Tech Linux (mLinux) operating system, these platforms also provide a familiar development environment for running device simulations. As separate field networks come online with physical sensors and other devices, each unit can return to its role as a communications gateway, redirecting processing efforts to requirements like data preprocessing.
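
The hand-off from simulation to real sensors is easiest when the gateway's forwarding loop depends only on a stream of readings, not on where they come from. The sketch below shows that pattern in plain Python; `simulated_source()` and `forward()` are hypothetical, and a physical source on the gateway (for example, a generator that yields decoded LoRaWAN uplinks in the same dictionary shape) is assumed rather than shown, since it depends on the gateway's software stack.

```python
import itertools
import json
import random


def simulated_source(device_id, seed=0):
    """Endless stream of simulated readings for one field device."""
    rng = random.Random(seed)
    while True:
        yield {"deviceId": device_id,
               "temperature": round(rng.uniform(15, 30), 1)}


def forward(source, publish, limit):
    """Gateway loop: pull readings from any source and push them upstream.

    The loop does not care whether `source` is simulated or physical; it
    only needs an iterable of dicts and a publish(payload) callable.
    """
    for reading in itertools.islice(source, limit):
        publish(json.dumps(reading))
```

When a field network comes online, only the `source` argument changes; the publishing path to the cloud interface service stays the same.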

Conclusion

IIoT applications present significant challenges for deployment of sensor networks in the field and development of cloud-based application software able to transform sensor data into useful results. The mutual dependence of sensor networks and application software can cause development to flounder while sensor deployment and software implementation wait for each other to reach a sufficient level of critical mass.

As shown, developers can break this deadlock and accelerate IIoT application development by simulating data streams at realistic levels of volume, velocity and variety.

Disclaimer: The opinions, beliefs, and viewpoints expressed by the various authors and/or forum participants on this website do not necessarily reflect the opinions, beliefs, and viewpoints of DigiKey or official policies of DigiKey.